XMANAI partner spotlight – Suite5

Name: Dr. Fenareti Lampathaki

Job title: Technical Director

Organization: Suite5 Data Intelligence Solutions Limited

Bio: Fenareti Lampathaki (female) holds a Ph.D. Degree and a Diploma – M.Eng. Degree in Electrical and Computer Engineering (Spec.: Computer Science) from the National Technical University of Athens (NTUA) and M. Sc. Degree in Techno-Economics (M.B.A.).

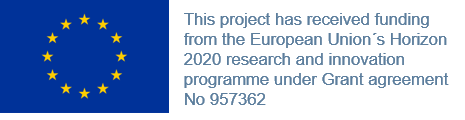

XMANAI Reference Architecture: Perspectives

The XMANAI reference architecture aims to provide the basis for the detailed specification, development and integration activities of the overall XMANAI platform, including its Data and AI related Services Bundles, its XAI Algorithms and Models Catalogue, and the different Manufacturing Apps.

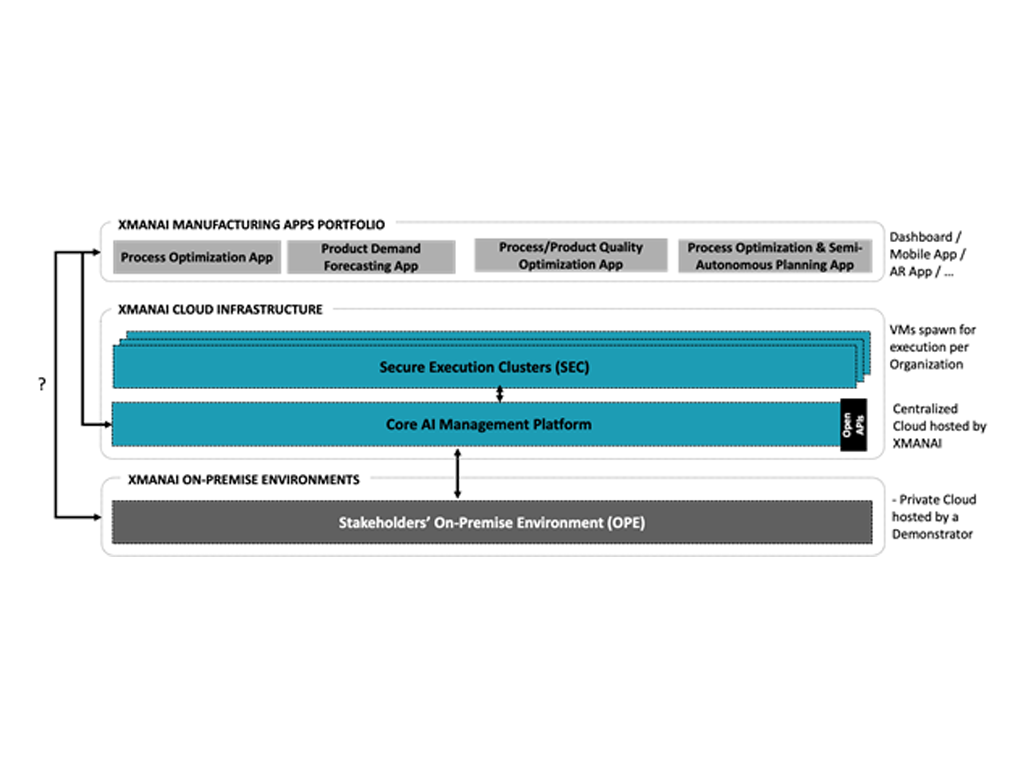

Security Aspects of Industrial Data Management

In the new digital world, where a tremendous amount of information is generated by an ever-increasing number of data sources, the need for security and privacy techniques, methods and solutions has become pressing.

XMANAI partner spotlight – AiDEAS

Name: Dr Serafeim Moustakidis

Job title: Co-founder / CTO

Organization: AiDEAS

Bio: Dr Serafeim Moustakidis has extensive experience in computational intelligence, machine learning and data processing, with more than 12 years of research experience in various fields.

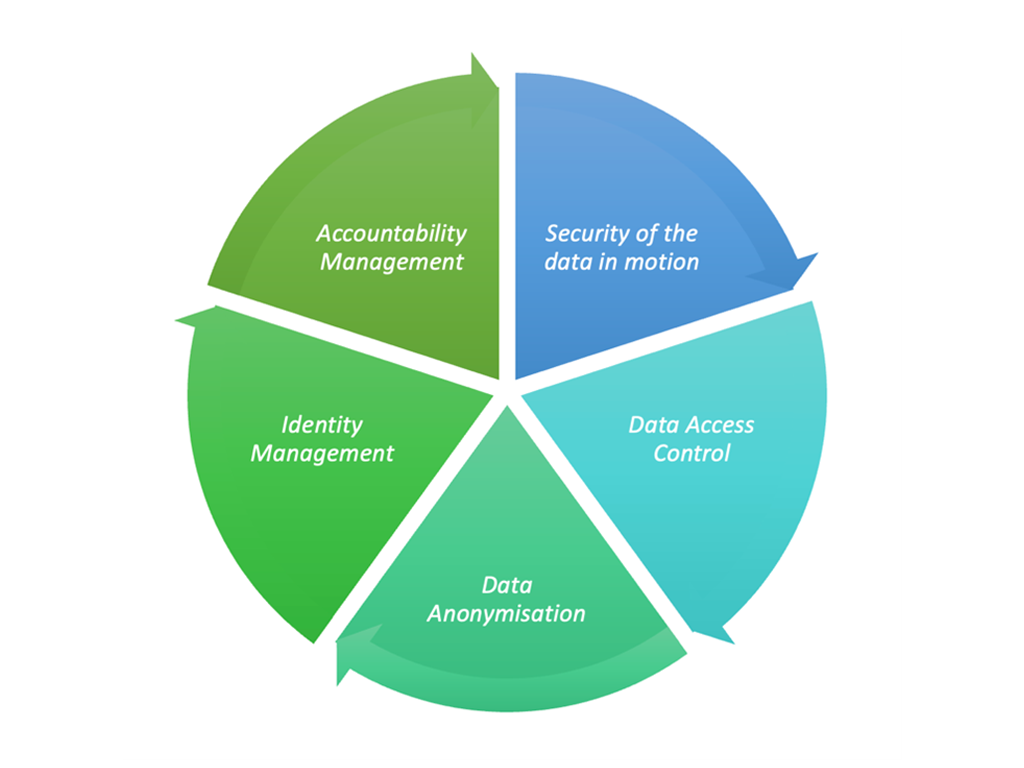

What is the XMANAI Minimum Viable Product (MVP)?

In XMANAI, the exploration of the Explainable AI landscape, the analysis of the business requirements of its four demonstrators and the elicitation of the technical requirements have culminated in the definition of the Minimum Viable Product (MVP).

XAI in Manufacturing

The implementation of Artificial Intelligence (AI) in the manufacturing domain enables higher production efficiency and outstanding performance.

Data Asset Management in XMANAI

The management of assets in XMANAI should meet a number of critical requirements. One of them is the explainability of data, since Explainable AI is the main objective of the project.

XMANAI partner spotlight – Tyris AI

Name: Dr. David Monzo

Job Title: Director of AI

Organization: Tyris AI

Bio: Dr. David Monzo is the technical director and main investigator at Tyris AI.

XMANAI partner spotlight – Politecnico di Milano

Q: What is your organisation’s role in XMANAI?

A: POLIMI is leading WP1 (Scientific Foundations), especially addressing Human Factors in the interaction with AI-based Autonomous Systems. The Collaborative Intelligence paradigm from Harvard Business Review will be applied and modelled in the industrial pilots so that it can be validated as […]

Education role in AI technology implementation in industry

Artificial Intelligence plays a crucial role in the digital transformation roadmap of traditional manufacturing companies. On the one hand, it can bring great step improvements in several areas; on the other, it is probably the most difficult technology to implement in a sustainable way, due to the lack of knowledge and the natural resistance to adoption that this type of technology generates in the people involved.

XMANAI partner spotlight – Fraunhofer FOKUS

Fraunhofer FOKUS is the leader of the work package “Asset Management Bundles Methods and System Designs”. In this work package, management and sharing methods for the assets are defined and implemented as prototypes. These assets are industrial data as well as the AI models and analyses based on these data.

AI Requirements for Manufacturing in the XMANAI Project

The XMANAI project is working to provide the tools to navigate the “transparency paradox” of Artificial Intelligence (AI) by designing, developing and deploying a novel Explainable AI Platform powered by explainable AI models that inspire trust, augment human cognition and solve concrete manufacturing problems with value-based explanations.

Have you ever heard about Graph Neural Networks?

XMANAI is one of the few European projects focusing on eXplainable Artificial Intelligence (XAI) methodology. However, XMANAI does not use XAI alone to analyze data more deeply and efficiently; it also introduces many other novel methodologies to provide better data insights. Have you ever heard of Graph Neural Networks?

A brief overview of XAI Landscape

The field of explainable AI is thriving with interesting solutions, showing the potential to address almost any task in any given setting. This surge of methods and models comes in response to interpretability being identified as one of the key factors for AI solutions to be trusted and widely deployed.

XMANAI partner spotlight – TXT e-solutions

TXT is the coordinator of the project and the exploitation leader. Within XMANAI, it brings its Industry 4.0 competence from its Industrial & Automotive business unit, together with its technical competence as an end-to-end provider of consultancy, software services and solutions to large enterprises, supporting the digital transformation of customers’ products and core processes.