BLOG

XAI Applications in the Direct to Consumer (D2C) market. The Whirlpool use cases

Whirlpool Corporation is the world’s leading kitchen and laundry

XMANAI Provenance Engine: Tracking data provenance and data lineage

What is Data Provenance and Why is it Important? Data provenance is akin

The XMANAI Platform and Tools Package

After the successful launch of the Beta Version, the XMANAI team is thrilled to announce the

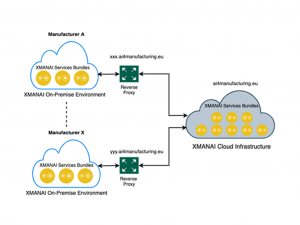

XMANAI On Premise Environment

XMANAI On-premise execution and visualization environment: A Deep Dive into the Final Release Version

The XMANAI platform offers a cutting-edge approach to

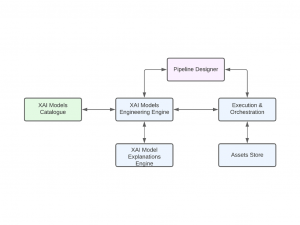

Models Catalog Component of the Platform

In the dynamic realm of Artificial Intelligence (AI) applied to manufacturing, XMANAI (eXplainable Manufacturing Artificial Intelligence)

Explaining Transformers

Transformers are neural network architectures that have delivered performant solutions in several fields including Natural Language Processing (NLP), computer vision

Archives

- Knowledge Transfer and Replication Roadmap

- XMANAI Scientific Highlights and Publications

- X-by-Design

- XAI for Metrology at UNIMETRIK: Smart Semi-autonomous Hybrid Measurement Planning

- Towards Process and Production Optimisation with XAI: The CNH Use Cases

- Holistic Production Overview using XAI technologies: The Ford use cases

- XAI Applications in the Direct to Consumer (D2C) market. The Whirlpool use cases

- XMANAI Provenance Engine: Tracking data provenance and data lineage

- The XMANAI Platform and Tools Package

- XMANAI On Premise Environment

- Models Catalog Component of the Platform

- Explaining Transformers

- How are the final MVP features contributing to the X-By-Design Concept?

- The Power of X-by-design: Pioneering Transparency and Explainability through Design

- Hackathon Event: Paving the Way to Transparent AI in Manufacturing with XMANAI

- Intelligent ETL Solutions for XMANAI: API- and File Data Harvester

- XMANAI Centralized Models Execution and Visualization Environment

- Industrialization Approaches to Explainability and the XMANAI Cases

- Ethics considerations for manufacturing XAI: the XMANAI Ethical Evaluation Framework

- Partner Spotlight – Ford Motor Company

- XAI Model Guard: The XMANAI AI Models Security Framework

- Explainable AI: a key to trust and acceptance of AI-based decision support systems

- Partner Spotlight – CNH Industrial

- Industrial Asset Graph Modelling in XMANAI

- XMANAI Validation Environment for AI Models

- zExplAIn, Improving Manufacturing Processes with Explainable AI

- XMANAI partner spotlight – Deep Blue

- Technical and Socio-Business assessments of AI Maturity in pilots of Explainable AI

- XMANAI partner spotlight – UNIMETRIK

- AI Algorithms Lifecycle Management and Collaboration

- XMANAI partner spotlight – Knowledgebiz

- XMANAI partner spotlight – UBITECH

- A First Glimpse into the XMANAI platform

- XMANAI partner spotlight – Whirlpool

- Overview requirements

- XMANAI partner spotlight – Athena Research Center

- Industrial Assets Provenance in XMANAI

- XMANAI partner spotlight – Innovalia Association

- The Draft Catalogue of XMANAI XAI Models

- XMANAI's Evaluation Framework

- XMANAI partner spotlight – Suite5

- XMANAI Reference Architecture: Perspectives

- Security Aspects of Industrial Data Management

- XMANAI partner spotlight – AiDEAS

- What is the XMANAI Minimum Viable Product (MVP)?

- XAI in Manufacturing

- Data Asset Management in XMANAI

- XMANAI partner spotlight – Tyris AI

- XMANAI partner spotlight – Politecnico di Milano

- Education role in AI technology implementation in industry